Searching in short-video apps is harder than it looks. People type very short queries, often just a name or a few words, so the search engine can’t always tell what they mean. For example, the same query might refer to a celebrity, a brand, or a song. A “one-size-fits-all” search engine returns the same results to everyone, so they may not match what a particular user actually wanted.

This paper introduces WeWrite, a system that uses a person’s recent behavior (what they watched, what they searched for, and even location signals) to rewrite the query into something clearer, but only when a rewrite is truly needed.

The two big problems WeWrite solves

1) “When should we rewrite?”

Rewriting every query is risky. Sometimes the original query is already clear (for example, a practical search such as “air fryer”). If the system rewrites that query based on your entertainment history, the results can drift away from what you actually asked for. This is called intent drift.

WeWrite avoids that by learning from real user behavior logs. It looks for patterns like:

- The user searches something and quickly leaves (a sign they didn’t find what they wanted).

- Then they rewrite the query themselves and finally watch a video for a meaningful amount of time.

Those cases become “good examples” that show when rewriting actually helps.

It also collects “do not rewrite” examples where the user’s first query worked fine—so the system learns to output “reject” (meaning: keep the original query).

To filter noisy cases (like simple typos), the authors add checks to confirm that the rewrite is actually connected to the user’s history.
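The mining logic described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the paper's pipeline: the `SearchEvent` fields, the 5-second "quick leave" threshold, the 10-second "meaningful watch" threshold, and the token-overlap history check are all hypothetical stand-ins chosen to keep the example small.

```python
from dataclasses import dataclass
from typing import List, Tuple

REJECT = "reject"        # label meaning "keep the original query"
QUICK_LEAVE_S = 5.0      # assumed threshold for "quickly leaves"
MIN_WATCH_S = 10.0       # assumed threshold for a "meaningful" watch


@dataclass
class SearchEvent:
    """One search in a session log (hypothetical schema)."""
    query: str
    dwell_seconds: float  # time spent on the results page
    watch_seconds: float  # longest watch on a clicked result, 0 if none


def related_to_history(rewrite: str, original: str, history: List[str]) -> bool:
    """Crude stand-in for the history check: the rewrite must add at least
    one token that also appears in the user's recent history."""
    new_tokens = set(rewrite.lower().split()) - set(original.lower().split())
    history_tokens = {t for item in history for t in item.lower().split()}
    return bool(new_tokens & history_tokens)


def mine_samples(events: List[SearchEvent], history: List[str]) -> List[Tuple[str, str]]:
    """Return (original_query, target) pairs; target is a rewrite or REJECT."""
    samples = []
    prev_failed = None  # most recent abandoned search, if any
    for ev in events:
        satisfied = ev.watch_seconds >= MIN_WATCH_S
        abandoned = not satisfied and ev.dwell_seconds < QUICK_LEAVE_S
        if satisfied and prev_failed is None:
            # The first query worked: a "do not rewrite" example.
            samples.append((ev.query, REJECT))
        elif satisfied:
            # The user reformulated after a failure; keep the pair only if
            # the reformulation is tied to their history (drops plain typos).
            if ev.query != prev_failed.query and related_to_history(
                ev.query, prev_failed.query, history
            ):
                samples.append((prev_failed.query, ev.query))
        prev_failed = ev if abandoned else None
    return samples
```

With a session of "jordan" (abandoned), "jordan sneakers review" (watched), and "air fryer" (watched), plus a history that mentions sneakers, the sketch yields one rewrite pair and one reject pair. A typo correction such as "air fyer" followed by "air fryer" is dropped because the new token does not appear in the history.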

2) “How should we rewrite?”

Even if the system knows rewriting is needed, it has to rewrite in a way that works with the search engine.

A common failure with AI-generated queries is producing text that is “smart” but doesn’t match anything in the search index, so it retrieves nothing (the “zero results” problem).

WeWrite trains the language model in two steps:

- Step A (supervised training): learn from the mined examples (rewrite vs reject).

- Step B (reinforcement-learning-style training): reward rewrites that are more “searchable” in the real system, meaning queries that resemble valid, popular queries and that have historically led to clicks.

So the model doesn’t just generate “good English”—it generates queries that are useful for retrieval.
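To make the idea of a “searchable” rewrite concrete, here is a toy scoring function. It is a sketch under assumptions, not the reward used in the paper: the `QUERY_STATS` table, the token-level Jaccard similarity, and the choice to multiply similarity by click-through rate are all hypothetical. The point is only that a rewrite scores well when it closely resembles a logged query that has historically led to clicks, and scores zero when it resembles nothing in the log.

```python
from typing import Dict, Tuple

# Hypothetical query log: query -> (search_count, historical click-through rate)
QUERY_STATS: Dict[str, Tuple[int, float]] = {
    "jordan sneakers review": (12000, 0.42),
    "air fryer recipes": (30000, 0.55),
}


def jaccard(a: str, b: str) -> float:
    """Token-level Jaccard similarity between two queries."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def searchability_reward(rewrite: str, stats: Dict[str, Tuple[int, float]] = QUERY_STATS) -> float:
    """Score a rewrite by its closest logged query, weighted by that query's CTR."""
    if not stats:
        return 0.0
    best_query = max(stats, key=lambda q: jaccard(rewrite, q))
    similarity = jaccard(rewrite, best_query)
    _count, ctr = stats[best_query]
    return similarity * ctr
```

A fluent but unindexed rewrite shares no tokens with any logged query and so gets a reward of zero, which is the “zero results” failure the training step is meant to discourage. The search count is carried in the table but left unused here; a fuller version could fold popularity into the score as well.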

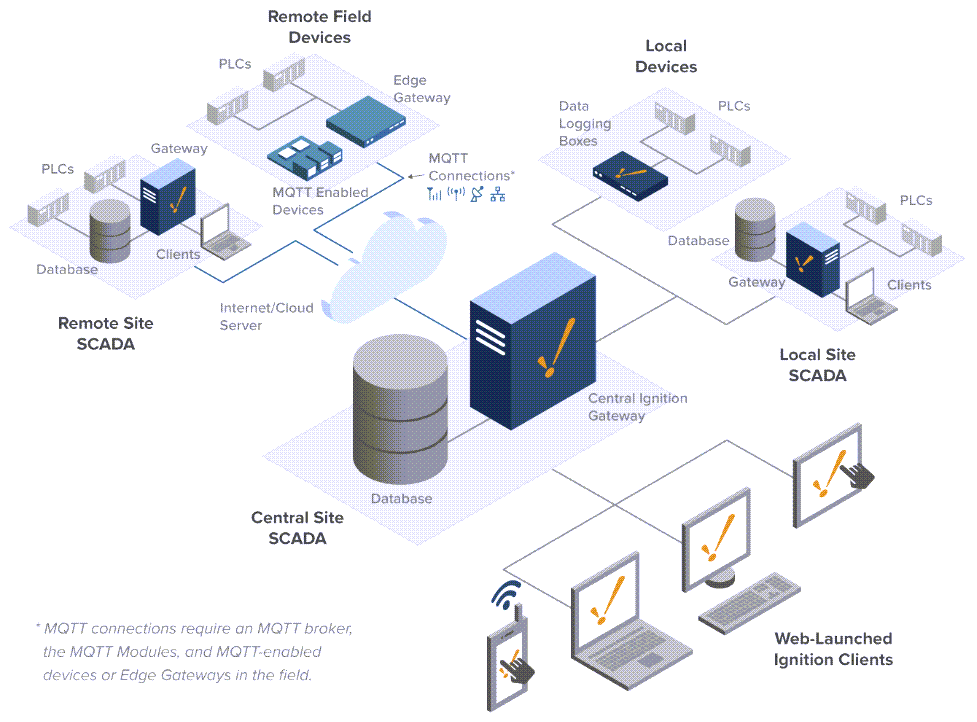

The biggest engineering challenge: latency

Large language models can be slow, and video search needs to feel instant. WeWrite solves this with a clever deployment trick called “Fake Recall”:

- The normal search runs as usual.

- In parallel, the system asks the LLM for a rewrite.

- Instead of sending that rewrite through the full heavy search pipeline, it checks a prebuilt cache/index that maps common queries to top results.

- If there’s a match, those results are filtered for relevance and merged into the final list.

Because it runs in parallel and uses a fast cache, users don’t feel extra delay.
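The flow above can be sketched with Python's `asyncio`. Everything named here is a hypothetical stand-in: `full_search`, `llm_rewrite`, `is_relevant`, the `QUERY_CACHE` contents, the simulated delays, and the append-to-the-end merge policy are assumptions made for illustration, since the summary does not specify them.

```python
import asyncio
from typing import Dict, List, Optional

# Hypothetical prebuilt cache mapping common queries to their top results.
QUERY_CACHE: Dict[str, List[str]] = {
    "jordan sneakers review": ["vid_101", "vid_102"],
}


async def full_search(query: str) -> List[str]:
    """Stand-in for the normal, heavy search pipeline."""
    await asyncio.sleep(0.05)
    return ["vid_001", "vid_002"]


async def llm_rewrite(query: str, history: List[str]) -> Optional[str]:
    """Stand-in for the LLM call; None means the model answered "reject"."""
    await asyncio.sleep(0.03)
    if query == "jordan" and any("sneakers" in h for h in history):
        return "jordan sneakers review"
    return None


def is_relevant(video_id: str, query: str) -> bool:
    """Placeholder relevance filter; a real one would score the video."""
    return True


async def search_with_fake_recall(query: str, history: List[str]) -> List[str]:
    # Run the normal search and the rewrite concurrently.
    base, rewrite = await asyncio.gather(
        full_search(query), llm_rewrite(query, history)
    )
    if rewrite is None:
        return base
    # Look the rewrite up in the cache instead of re-running the full pipeline.
    cached = QUERY_CACHE.get(rewrite, [])
    extra = [v for v in cached if is_relevant(v, query) and v not in base]
    return base + extra
```

Calling `asyncio.run(search_with_fake_recall("jordan", ["sneakers unboxing"]))` returns the normal results followed by the cached results for the rewrite. Because the two coroutines run concurrently, the total wait is roughly that of the slower one rather than the sum of both.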

Real-world results

In online A/B testing on a large video platform, WeWrite:

- Increased meaningful clicks/watches (watch time over 10 seconds) by 1.07%

- Reduced how often people re-typed/reformulated their query by 2.97%

Why it matters

The key idea is simple:

Personalization is powerful, but only if you know when to use it—and you can’t slow the search down.

WeWrite is a practical blueprint for making LLM-powered query rewriting work in real-time video search without breaking speed or intent.