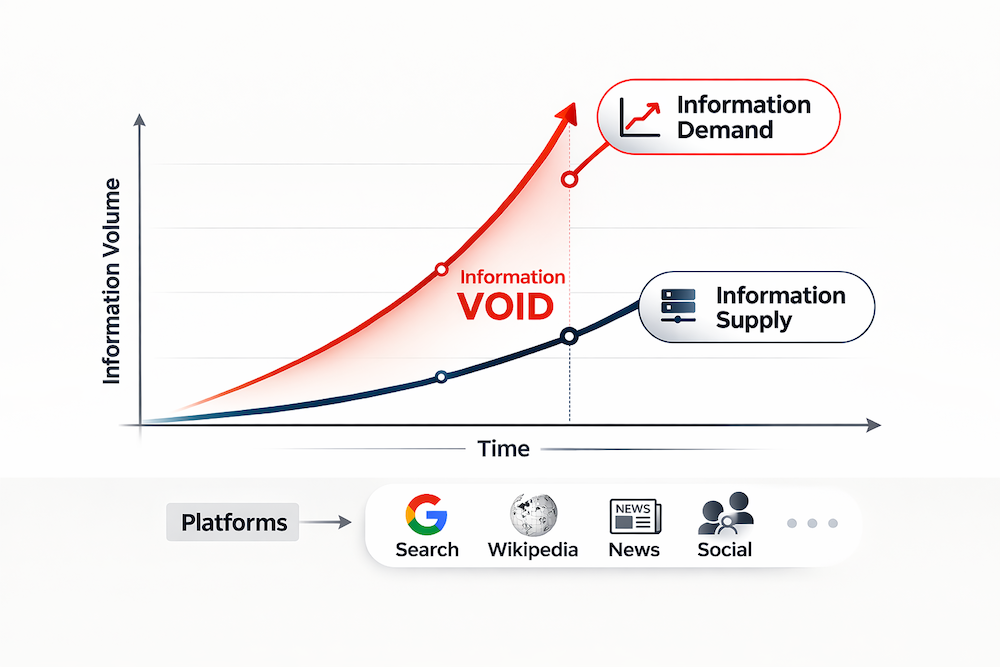

Social media and the open web run on an attention economy: people demand information (they search, read, and click) while creators and media supply it (they post, publish, and share). When those two forces fall out of sync, users are more likely to run into unreliable content.

This paper introduces a practical way to detect information voids: periods when public interest spikes but reliable content doesn’t keep up. In those moments, people still go looking for answers, but the ecosystem can’t supply enough high-quality information fast enough. The result is a gap that low-quality sources can exploit.

What is an “information void,” really?

An information void isn’t just “low content.” It’s a structural imbalance:

- Demand is high (people are searching and reading more than usual)
- Supply is low or lagging (not enough solid content is being produced or surfaced)

The authors also define the opposite extreme: information overabundance, when content production overwhelms demand (lots of posts/articles, relatively low attention).

Together, voids and overabundance are framed as regimes of the information ecosystem—different “states” the internet can be in at any point in time.

The core idea: measure the “gap” between supply and demand

The method is a time-series pipeline that treats the web like a market:

Demand proxies (what people want):

- Google Trends (search interest)
- Wikipedia page views (information-seeking behavior)

Supply proxies (what gets produced):

- Facebook posts
- Twitter/X posts
- Online news volume (via GDELT)

Because these sources have different scales (Twitter can dwarf others, Wikipedia behaves differently, etc.), each time series is normalized against its own typical level. Then the authors compute a simple information delta:

supply (normalized) minus demand (normalized)

- Negative delta → demand exceeds supply (risk of void)
- Positive delta → supply exceeds demand (risk of overload)
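
The delta computation can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' code: it assumes the "typical level" is each series' median and that several sources on the same side are averaged after normalization. The function names and the sample counts are made up for the example.

```python
from statistics import median

def normalize(series):
    """Scale a series by its own median so sources of different volume are comparable."""
    typical = median(series)  # assumption: median stands in for the "typical level"
    if typical == 0:
        raise ValueError("typical level is zero; cannot normalize")
    return [x / typical for x in series]

def information_delta(supply_sources, demand_sources):
    """Return normalized supply minus normalized demand, one value per time step."""
    def combine(sources):
        normalized = [normalize(s) for s in sources]
        # Assumption: sources on the same side are averaged at each time step.
        return [sum(vals) / len(vals) for vals in zip(*normalized)]

    supply = combine(supply_sources)
    demand = combine(demand_sources)
    return [s - d for s, d in zip(supply, demand)]

# Made-up daily counts for illustration only.
tweets = [1000, 1100, 1000, 900, 1000]
news = [50, 50, 55, 50, 45]
searches = [20, 20, 60, 40, 20]

delta = information_delta([tweets, news], [searches])
```

In this toy data, search interest triples on the third day while posting volume barely moves, so the delta for that day is strongly negative.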

How they detect “true” voids (not just normal noise)

Online attention is naturally spiky. So the pipeline doesn’t treat every dip as a crisis. Instead, it uses time-series anomaly detection:

- Decompose the delta series into trend + seasonality + remainder
- Focus on the remainder (the “unexpected” part)
- Flag unusually large deviations using conservative statistical thresholds
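
A minimal sketch of these three steps follows. The summary does not name the decomposition or the threshold rule, so the sketch swaps in simple stand-ins: a centered moving average for the trend, per-weekday means for seasonality, and an interquartile-range fence with a wide multiplier `k` as the "conservative" threshold. The function names, `period=7`, and `k=3.0` are all assumptions.

```python
from statistics import mean, quantiles

def decompose(series, period=7):
    """Additive split into trend, seasonal, and remainder (needs len(series) >= period)."""
    n = len(series)
    half = period // 2
    # Trend: centered moving average; the window shrinks near the edges.
    trend = [mean(series[max(0, i - half): min(n, i + half + 1)]) for i in range(n)]
    detrended = [x - t for x, t in zip(series, trend)]
    # Seasonality: mean detrended value at each position in the cycle.
    seasonal_means = [mean(detrended[p::period]) for p in range(period)]
    seasonal = [seasonal_means[i % period] for i in range(n)]
    remainder = [d - s for d, s in zip(detrended, seasonal)]
    return trend, seasonal, remainder

def flag_anomalies(remainder, k=3.0):
    """Return indices where the remainder falls outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(remainder, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [i for i, r in enumerate(remainder) if r < low or r > high]

# Synthetic 4-week delta series with a weekly pattern and one sharp drop on day 17.
delta = [0.1 * ((i % 7) - 3) for i in range(28)]
delta[17] -= 5.0

trend, seasonal, remainder = decompose(delta)
flagged = flag_anomalies(remainder)
```

The regular weekly swing is absorbed by the seasonal component, so only the sudden drop stands out in the remainder. A production pipeline would more likely use an established decomposition such as STL.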

This produces five labeled states:

- Void
- Lack
- Balance
- Abundance
- Overabundance
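
One way to picture the labeling is as a pair of nested thresholds on the remainder. The summary does not say where the boundaries between states lie, so the `inner` and `outer` cutoffs below are hypothetical parameters, and the symmetric layout is an assumption made for illustration.

```python
def label_state(remainder, inner, outer):
    """Map one remainder value to a state, given thresholds with 0 < inner < outer."""
    if remainder <= -outer:
        return "Void"           # demand far exceeds supply
    if remainder <= -inner:
        return "Lack"           # demand moderately exceeds supply
    if remainder < inner:
        return "Balance"
    if remainder < outer:
        return "Abundance"      # supply moderately exceeds demand
    return "Overabundance"      # supply far exceeds demand

# Made-up remainder values and cutoffs, for illustration only.
states = [label_state(r, inner=0.5, outer=1.5) for r in [-2.0, -0.8, 0.1, 0.9, 3.0]]
```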

The key operational win: it’s replicable (works across topics and countries) and longitudinal (tracks how risk evolves day-by-day or week-by-week).

Real-world test: COVID-19 vaccine rollout in 6 countries

The case study spans Jan 1, 2020 → Apr 30, 2021 across Denmark, France, Germany, Italy, Spain, and the UK, focusing on major vaccine brands.

Their results show that sensitive moments—like vaccine authorizations or safety scares—trigger sharp supply/demand imbalances. Importantly:

- Information voids often persist longer than periods of overabundance.
- The ecosystem tends to recover more slowly when demand spikes than when supply spikes.

They highlight long stretches of consecutive “void days,” suggesting that once a gap opens, it can remain open long enough for lower-quality narratives to spread.
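
Given a sequence of daily state labels, streaks of consecutive void days are easy to measure. The sketch below uses made-up labels, and the helper name `void_streaks` is hypothetical.

```python
from itertools import groupby

def void_streaks(states):
    """Return the length of every run of consecutive 'Void' labels, in order."""
    return [sum(1 for _ in run) for label, run in groupby(states) if label == "Void"]

# Made-up daily labels for illustration only.
daily = ["Balance", "Void", "Void", "Void", "Lack", "Void", "Balance"]

streaks = void_streaks(daily)
longest = max(streaks, default=0)
```

Here `streaks` holds one entry per uninterrupted void period, so comparing it with the same statistic for "Overabundance" would show which regime tends to last longer.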

The scary part: voids correlate with worse information quality

The authors don’t claim strict causality, but they show a strong association:

- During voids, the share of content from highly credible sources drops
- The share of content rated as “proceed with maximum caution” (very low credibility / misinformation-prone) increases

They validate this by matching domains shared on Facebook and Twitter with NewsGuard credibility scores. The pattern is consistent: voids are hotspots where users are more likely to encounter low-quality or misleading content.
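
The comparison can be sketched as a per-state share of low-credibility links. Everything concrete here is an assumption: the domains and scores are invented, the 0-100 scale and the cutoff of 40 are placeholders rather than values taken from the paper, and unrated domains are simply skipped.

```python
from collections import defaultdict

def low_credibility_share(links, scores, cutoff=40):
    """Per state, the fraction of rated shared links whose domain scores below `cutoff`."""
    rated = defaultdict(int)
    low = defaultdict(int)
    for state, domain in links:
        if domain not in scores:
            continue  # unrated domains are skipped, not counted as low quality
        rated[state] += 1
        if scores[domain] < cutoff:
            low[state] += 1
    return {state: low[state] / rated[state] for state in rated}

# Hypothetical credibility scores and made-up (state, shared domain) pairs.
scores = {"reliable.example": 95, "dubious.example": 20}
links = [
    ("Void", "dubious.example"), ("Void", "reliable.example"),
    ("Balance", "reliable.example"), ("Balance", "reliable.example"),
    ("Balance", "dubious.example"), ("Balance", "unrated.example"),
]

shares = low_credibility_share(links, scores)
```

A higher share for "Void" than for "Balance" in real data would match the association the authors report. It would not, on its own, establish causality.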

Why this matters (beyond COVID)

This approach can be used as an early-warning system for:

- public health crises
- elections and political shocks
- climate disasters
- sudden breaking news events
- emerging technologies and rumors

For institutions, it’s actionable: if you can detect a void forming, you can time communications (FAQs, explainer pages, press briefings) to fill the gap before misinformation dominates the search results and social feeds.

Bottom line: misinformation doesn’t only spread because people are gullible or platforms are chaotic. It can spike because the information ecosystem temporarily fails a basic job: meeting demand with trustworthy supply.

source: https://arxiv.org/pdf/2602.15476