This paper proposes a new method to forecast oil price volatility that is:

- just as accurate as the best existing models
- vastly faster computationally (up to 62,000× faster)
- easier to interpret
- scalable to large financial systems

It achieves this by combining network theory with GARCH volatility models.

Why Oil Volatility Is Hard to Predict

Oil markets are unusually complex because:

- OPEC countries influence supply strategically
- Geopolitics affects production
- Global demand fluctuates
- Countries respond differently to shocks

Traditional econometric models struggle, each for a different reason:

| Model Type | Weakness |
|---|---|
| Standard GARCH | Treats assets separately |
| Multivariate GARCH | Too computationally heavy |
| Simple correlation networks | Miss structural relationships |

So researchers need something that is:

✔ realistic

✔ scalable

✔ fast

✔ interpretable

Core Innovation — “GARCH-Informed Network Models”

The key idea:

Instead of guessing relationships between countries, infer them from conditional volatility correlations estimated by GARCH models.

Traditional network models often use:

- Euclidean distance
- Simple correlations
- Heuristic clustering

These are static and naive.

The authors instead build networks from:

- CCC-GARCH correlations (constant)
- DCC-GARCH correlations (dynamic)
- GO-GARCH latent factor correlations

So the network edges encode actual economic dependence structures.
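As a minimal sketch of how a correlation matrix could become network weights: the snippet below takes absolute correlations as edge strengths, removes self-loops, and row-normalizes. The function name `correlation_to_weights`, the use of absolute values, and the example matrix are illustrative assumptions, not the authors' exact construction; in practice the correlations would come from a fitted CCC-, DCC-, or GO-GARCH model.

```python
import numpy as np

def correlation_to_weights(corr: np.ndarray) -> np.ndarray:
    """Turn a correlation matrix into a row-normalized network weight matrix."""
    # Assumption: edge strength is the absolute correlation.
    w = np.abs(np.asarray(corr, dtype=float))
    np.fill_diagonal(w, 0.0)                 # a node is not its own neighbor
    row_sums = w.sum(axis=1, keepdims=True)
    row_sums[row_sums == 0.0] = 1.0          # isolated nodes keep an all-zero row
    return w / row_sums

# Illustrative correlation matrix (made-up numbers, not from the paper).
corr = np.array([
    [1.0, 0.8, 0.2],
    [0.8, 1.0, 0.4],
    [0.2, 0.4, 1.0],
])
W = correlation_to_weights(corr)
print(W.round(3))
```

Row normalization makes each row sum to one, so a node's network term is a weighted average of its neighbors.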

What the Model Looks Like Conceptually

Think of each country as a node:

Saudi Arabia ─ Iran ─ UAE

│   │

Nigeria ─ Libya ─ Algeria

The volatility of each country depends on:

- its own past volatility
- the current volatility of connected countries

Mathematically:

volatility(i,t) = past(i) + network influence(neighbors)
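One way to write this schematic more formally is sketched below. The symbols are assumptions for illustration and may differ from the paper's notation: $h_{i,t}$ is the conditional variance of country $i$ at time $t$, $w_{ij}$ is the fixed network weight between countries $i$ and $j$, and $\omega$, $\gamma$, $\rho$ are scalar parameters. Working in logs is also an assumption; it keeps the implied variance positive.

```latex
\log h_{i,t} = \omega
  + \underbrace{\gamma \log h_{i,t-1}}_{\text{past}(i)}
  + \underbrace{\rho \sum_{j \neq i} w_{ij} \log h_{j,t}}_{\text{network influence (neighbors)}}
```

The neighbors enter at time $t$, not $t-1$, so a shock to one country can affect the others within the same period.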

This captures instantaneous spillovers, which classic time-series models miss.
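To illustrate what "instantaneous" means in practice, here is a small sketch under assumed dynamics: log-volatility depends on its own lag and on the same-period log-volatility of neighbors, so each step requires solving a linear system. The function `network_volatility_step`, the parameters `omega`, `rho`, `gamma`, and the toy weight matrix are all hypothetical and chosen only for illustration.

```python
import numpy as np

def network_volatility_step(log_h_prev, W, omega, rho, gamma):
    """Solve (I - rho * W) x = omega + gamma * log_h_prev for current log-volatility x."""
    n = W.shape[0]
    rhs = omega + gamma * np.asarray(log_h_prev, dtype=float)
    return np.linalg.solve(np.eye(n) - rho * W, rhs)

# Toy row-normalized network: node 1 sits between nodes 0 and 2.
W = np.array([
    [0.0, 1.0, 0.0],
    [0.5, 0.0, 0.5],
    [0.0, 1.0, 0.0],
])
omega, rho, gamma = 0.0, 0.4, 0.5
log_h_prev = np.array([1.0, 0.0, 0.0])   # only node 0 was volatile last period

isolated = network_volatility_step(log_h_prev, W, omega, 0.0, gamma)   # no network term
connected = network_volatility_step(log_h_prev, W, omega, rho, gamma)  # with spillovers
print("without network:", isolated.round(3))
print("with network:   ", connected.round(3))
```

With `rho = 0` only node 0 carries volatility forward. With `rho > 0` nodes 1 and 2 pick up some of it in the same period, including node 2, which is not directly linked to node 0. For a row-normalized `W`, the system is solvable whenever `abs(rho) < 1`.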

Why This Is Powerful

Standard multivariate volatility models estimate huge covariance matrices repeatedly.

That causes:

- slow runtime
- high memory usage
- convergence failures

The proposed method:

- estimates only a small set of parameters
- uses GMM instead of likelihood optimization
- relies on fixed network weights

Result:

| Metric | Improvement |
|---|---|
| Speed | 27,000–62,000× faster |
| Memory | ~51% less |
| Accuracy | Equal or better |

This is rare in quantitative modeling, where speed and accuracy normally trade off against each other.

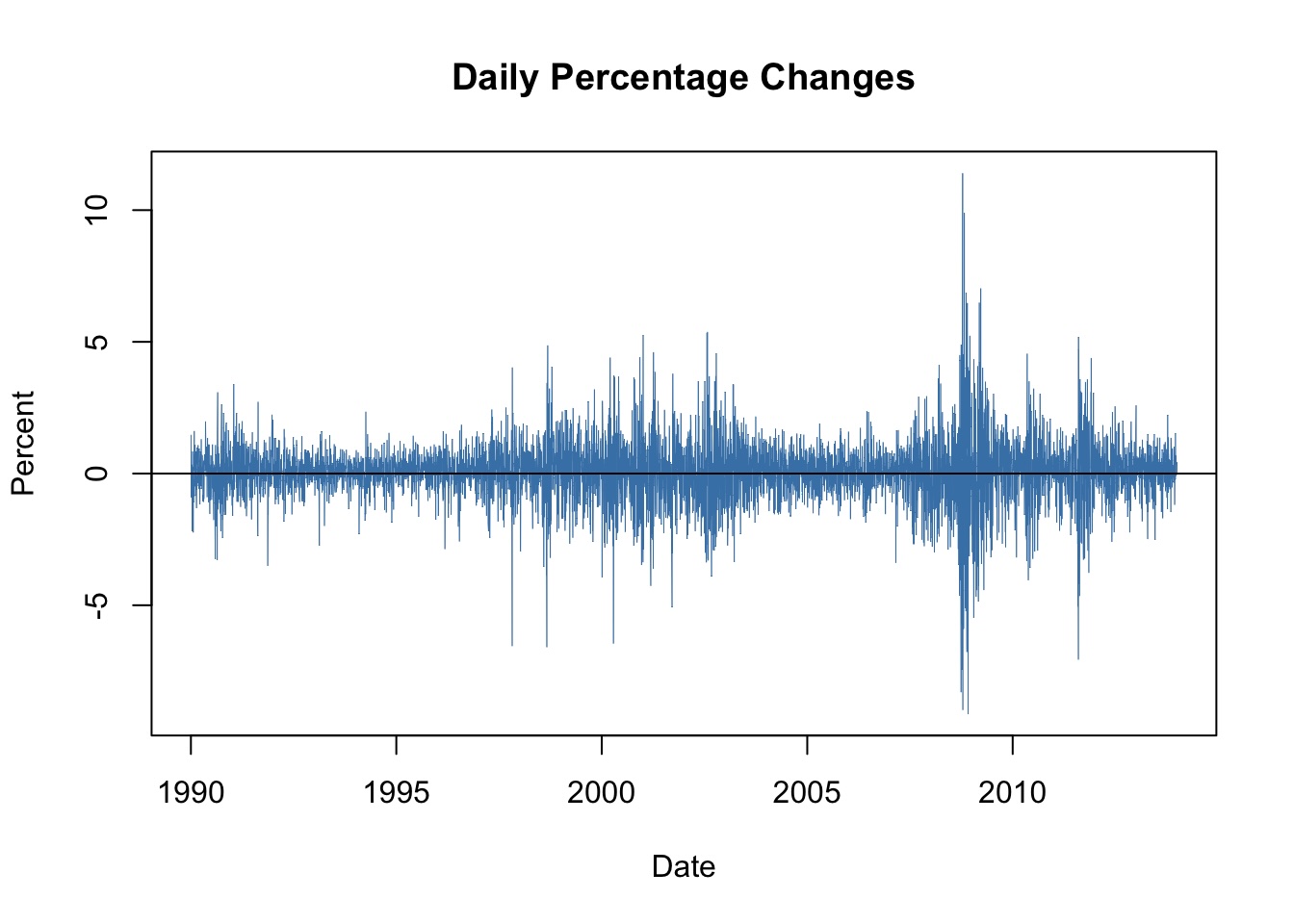

Empirical Results (Real Data Test)

Dataset:

- Monthly oil prices
- 1983–2024
- 6 OPEC countries

They compared models using:

- RMSFE (penalizes large errors)
- MAFE (average absolute error)
- Diebold-Mariano tests
- Model Confidence Set selection
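The first three criteria can be sketched in a few lines. This is a simplified illustration, not the paper's evaluation code: the Diebold-Mariano statistic below assumes one-step-ahead forecasts and squared-error loss, so it uses the plain sample variance with no autocorrelation correction. The function names and the example numbers are made up. The Model Confidence Set procedure is not shown.

```python
import math
import numpy as np

def rmsfe(actual, forecast):
    """Root mean squared forecast error."""
    e = np.asarray(actual, dtype=float) - np.asarray(forecast, dtype=float)
    return float(np.sqrt(np.mean(e ** 2)))

def mafe(actual, forecast):
    """Mean absolute forecast error."""
    e = np.asarray(actual, dtype=float) - np.asarray(forecast, dtype=float)
    return float(np.mean(np.abs(e)))

def diebold_mariano(actual, f1, f2):
    """DM statistic and two-sided normal p-value (one-step-ahead, squared-error loss)."""
    a = np.asarray(actual, dtype=float)
    # Loss differential: negative values mean forecast 1 had the smaller loss.
    d = (a - np.asarray(f1, dtype=float)) ** 2 - (a - np.asarray(f2, dtype=float)) ** 2
    stat = d.mean() / math.sqrt(d.var(ddof=1) / d.size)
    p_value = math.erfc(abs(stat) / math.sqrt(2.0))
    return float(stat), p_value

# Made-up numbers for illustration only.
actual = [1.0, 2.0, 3.0, 4.0]
model_a = [1.1, 1.9, 3.2, 3.8]
model_b = [1.5, 2.5, 2.0, 5.0]

print("RMSFE:", rmsfe(actual, model_a), rmsfe(actual, model_b))
print("MAFE: ", mafe(actual, model_a), mafe(actual, model_b))
print("DM:   ", diebold_mariano(actual, model_a, model_b))
```

A negative DM statistic favors the first forecast and a positive one favors the second. With only four observations the p-value here is not meaningful; the example only shows the mechanics.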

Performance Ranking (Simplified)

Best → Worst

1. Network GO-GARCH
2. Network CCC-GARCH
3. Network DCC-GARCH
4. Standard DCC-GARCH
5. Standard CCC-GARCH
6. Distance-based networks
7. Standard GO-GARCH

Important insight:

The network structure matters more than the specific GARCH model.

Economic Interpretation

The network graphs revealed structural insights about OPEC:

- Saudi Arabia = central volatility hub
- Gulf countries cluster together
- African producers cluster together
- Iran’s connections vary due to sanctions and policy shifts

So the model isn’t just predictive; it’s also explanatory.
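One common way to identify a "hub" in a weighted network is node strength, the total weight of a node's edges. The sketch below is an assumption about how such a ranking could be computed, not a description of the authors' procedure; the function `strength_centrality`, the generic labels, and the weights are hypothetical.

```python
import numpy as np

def strength_centrality(weights, labels):
    """Rank nodes by total incident edge weight (node strength), strongest first."""
    w = np.asarray(weights, dtype=float)
    strength = w.sum(axis=0) + w.sum(axis=1)   # incoming plus outgoing weight
    order = np.argsort(-strength)
    return [(labels[i], float(strength[i])) for i in order]

# Toy symmetric network with made-up weights; "A" is strongly tied to every other node.
labels = ["A", "B", "C", "D"]
weights = np.array([
    [0.0, 0.9, 0.8, 0.7],
    [0.9, 0.0, 0.1, 0.1],
    [0.8, 0.1, 0.0, 0.1],
    [0.7, 0.1, 0.1, 0.0],
])
ranking = strength_centrality(weights, labels)
for name, score in ranking:
    print(name, round(score, 2))
```

In this toy network the node with the largest strength is "A", which plays the role of the hub.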

Why Policymakers Care

Better volatility forecasting helps:

- sovereign wealth funds
- central banks
- commodity traders
- risk managers

This matters especially for oil-dependent economies, where volatility affects:

- GDP
- investment
- fiscal stability

Because the model is extremely fast, it enables near real-time systemic risk monitoring.

Methodological Contribution (Academic Perspective)

The real theoretical advance is this:

They merged three previously separate fields:

| Field | Contribution |
|---|---|
| Financial econometrics | GARCH volatility modeling |
| Network science | Interconnected systems |
| Spatial econometrics | Spillover estimation |

This synthesis creates a new modeling class:

network-embedded volatility processes

That’s a structural innovation, not just a parameter tweak.

Main Takeaway

The paper’s central claim:

If you build networks using economically meaningful correlations instead of arbitrary similarity measures, you can get both speed and accuracy.

That’s why their models outperform traditional approaches.

source: https://arxiv.org/pdf/2507.15046