Protein science has a scale problem: databases like UniProt contain hundreds of millions of protein sequences, but only a tiny fraction have reliable experimental annotations. That gap slows down everything from target discovery to protein engineering. Protein Language Models (PLMs) like ESM have helped by learning patterns from huge sequence collections, but they’re often built as “single-task experts.” Meanwhile, general Large Language Models (LLMs) can reason, explain, and answer questions, but they don’t natively understand protein sequences.

BioBridge proposes a practical middle path: keep the broad reasoning strength of an LLM while giving it real protein understanding—without forcing a full retrain from scratch or sacrificing general language ability.

The core idea: bridge two worlds (protein + language)

BioBridge combines:

- A strong protein encoder (ESM2) to read amino-acid sequences and produce high-quality protein embeddings.
- A projection/alignment stack that maps protein embeddings into the LLM’s semantic space, so the LLM can “attend” to protein information like it would attend to text.
- A training strategy designed to avoid catastrophic forgetting, so the LLM doesn’t lose general reasoning skills while learning biomedical knowledge.

The result is a model that can handle both:

- protein property prediction (function, localization, binding-related tasks, etc.), and
- knowledge-style biological Q&A, while still staying competitive on general benchmarks.

Why this is hard (and what BioBridge targets)

The paper highlights two recurring blockers in protein + LLM fusion:

- The knowledge barrier + forgetting problem

  If you keep pretraining an LLM on biology-heavy text, it often gets “better at biology” but worse at general tasks (the classic forgetting issue). Many domain-adaptation approaches require extra “recovery tuning” afterward to restore instruction-following and general competence.

- Modality mismatch

  Protein sequences are not natural language. Their “meaning” depends on long-range interactions and biophysical constraints. Simple protein-text pairing can produce superficial alignment that doesn’t actually support biological reasoning.

BioBridge addresses both with a staged training pipeline.

Three training stages (high-level, no math)

Stage 1 — Domain-Incremental Continual Pretraining (DICP)

BioBridge starts from Qwen2.5-7B-Instruct and continues pretraining on a mixed corpus that includes:

- biology textbooks,
- PubMed full texts and abstracts,
- “sequence-injected” biomedical text (where protein sequences are inserted or appended in context),
- high-quality protein description pairs (e.g., Swiss-Prot).
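To make “sequence-injected” text concrete, here is a minimal sketch of what inserting or appending a sequence in context could look like. The `inject_sequence` helper, the `<protein>` delimiters, and the example passage are illustrative assumptions, not the paper's actual preprocessing.

```python
def inject_sequence(text: str, name: str, sequence: str, mode: str = "inline") -> str:
    """Insert `sequence` right after the first mention of `name` (mode="inline"),
    or append it to the end of the passage (mode="append" or name not found)."""
    # Hypothetical delimiters; the paper's real markup is not specified here.
    tagged = f"<protein>{sequence}</protein>"
    idx = text.find(name)
    if mode == "inline" and idx != -1:
        end = idx + len(name)
        return f"{text[:end]} {tagged}{text[end:]}"
    return f"{text.rstrip()} {tagged}"


passage = "Ubiquitin is a small regulatory protein found in most eukaryotic tissues."
seq = "MQIFVKTLTGK"  # short fragment used purely for illustration

inline = inject_sequence(passage, "Ubiquitin", seq)
appended = inject_sequence(passage, "Ubiquitin", seq, mode="append")

print(inline)
print(appended)
```

Either variant puts the raw sequence next to the prose that describes it, so the model sees both in the same context window during pretraining.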

To reduce forgetting, the authors mix in general reasoning data (math, code, science problem sets). Think of this as a “keep your brain sharp” replay buffer while specializing in biology.
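The replay idea can be sketched as a sampler that interleaves general-purpose examples with domain examples at a fixed rate. This is only an illustration: the `mixed_stream` function, the toy corpora, and the 25% replay ratio are assumptions, and the paper's actual mixing proportions may differ.

```python
import random
from typing import Iterator


def mixed_stream(
    domain: list[str],
    general: list[str],
    replay_ratio: float,
    n: int,
    seed: int = 0,
) -> Iterator[tuple[str, str]]:
    """Yield n (source, example) pairs; each draw comes from the general
    replay pool with probability `replay_ratio`, otherwise from the domain pool."""
    rng = random.Random(seed)
    for _ in range(n):
        if rng.random() < replay_ratio:
            yield ("general", rng.choice(general))
        else:
            yield ("domain", rng.choice(domain))


# Toy stand-ins for the real corpora.
domain_docs = ["textbook chapter", "PubMed abstract", "sequence-injected passage"]
general_docs = ["math problem", "code snippet", "science question"]

# replay_ratio=0.25 is an assumed value chosen for illustration.
batch = list(mixed_stream(domain_docs, general_docs, replay_ratio=0.25, n=1000))
general_share = sum(1 for source, _ in batch if source == "general") / len(batch)
print(f"general share: {general_share:.2f}")
```

Sampling per example, rather than training on the two corpora back to back, keeps general-reasoning data present throughout the run instead of only at the start or end.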

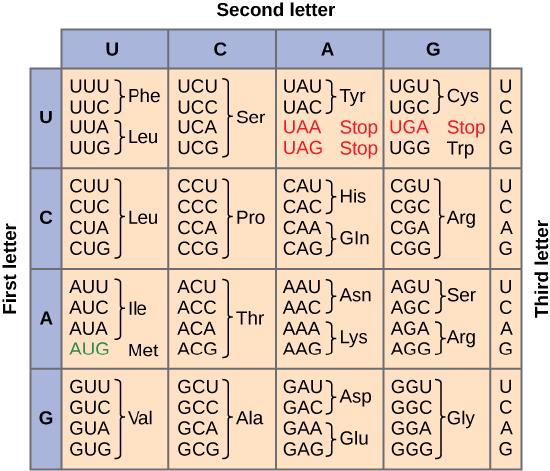

Stage 2 — Protein-text alignment (ESM2 + Q-Former + contrastive alignment)

Proteins are encoded by ESM2 (kept frozen for stability). A query-based module (Q-Former) extracts a compact set of protein features suitable for language alignment. Then BioBridge aligns these protein features with text descriptions using large-scale paired datasets (e.g., OntoProtein + Swiss-Prot pairs). This is where the model learns: “this protein pattern corresponds to that biological description.”
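One common way to implement this kind of contrastive alignment is a symmetric InfoNCE loss of the kind used in CLIP-style training; whether BioBridge uses exactly this form is an assumption here. The sketch below also assumes each protein's Q-Former outputs have already been pooled into a single vector, and the `info_nce` name and the temperature of 0.07 are illustrative choices.

```python
import math


def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length (a zero vector is returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def info_nce(protein_vecs, text_vecs, temperature: float = 0.07) -> float:
    """Symmetric InfoNCE: pair i in protein_vecs matches pair i in text_vecs;
    every other item in the batch serves as a negative."""
    p = [normalize(v) for v in protein_vecs]
    t = [normalize(v) for v in text_vecs]
    n = len(p)
    # Cosine similarity matrix, scaled by the temperature.
    logits = [
        [sum(a * b for a, b in zip(p[i], t[j])) / temperature for j in range(n)]
        for i in range(n)
    ]

    def cross_entropy_on_diagonal(matrix) -> float:
        total = 0.0
        for i, row in enumerate(matrix):
            row_max = max(row)  # subtract the max for numerical stability
            log_z = row_max + math.log(sum(math.exp(x - row_max) for x in row))
            total += log_z - row[i]
        return total / len(matrix)

    text_to_protein = [list(col) for col in zip(*logits)]
    return 0.5 * (
        cross_entropy_on_diagonal(logits)
        + cross_entropy_on_diagonal(text_to_protein)
    )


proteins = [[1.0, 0.0], [0.0, 1.0]]
aligned_loss = info_nce(proteins, [[0.9, 0.1], [0.1, 0.9]])
shuffled_loss = info_nce(proteins, [[0.1, 0.9], [0.9, 0.1]])
print(aligned_loss < shuffled_loss)
```

The loss is low when each protein vector is closest to its own description and high when the pairing is scrambled, which is the signal that pulls matching protein and text representations together.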

Stage 3 — End-to-end fine-tuning for unified use

Finally, the system is tuned end-to-end so the LLM can generate outputs grounded in protein embeddings. Importantly, the paper emphasizes that this is done without training on downstream benchmark-specific datasets, yet the model still generalizes across many tasks.
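A rough picture of how an LLM can consume protein features: project each query feature into the LLM's hidden size and place the results in front of the text token embeddings. The sketch below assumes a single linear projection and prefix placement, which is one common design for this kind of bridge; the paper's exact projector and token layout may differ, and all names and sizes are toy values.

```python
import random


def linear(x: list[float], weight: list[list[float]], bias: list[float]) -> list[float]:
    """Compute y = W x + b for one vector; weight has shape [out_dim][in_dim]."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def build_llm_inputs(query_feats, text_embeds, weight, bias):
    """Project protein query features to the LLM width and prepend them
    to the text embeddings as a soft prefix."""
    protein_tokens = [linear(q, weight, bias) for q in query_feats]
    return protein_tokens + text_embeds


rng = random.Random(0)
Q_DIM, LLM_DIM, N_QUERIES, N_TEXT = 4, 6, 3, 5  # toy sizes, not the real model's

# Randomly initialised projector; in training these weights would be learned.
weight = [[rng.uniform(-0.1, 0.1) for _ in range(Q_DIM)] for _ in range(LLM_DIM)]
bias = [0.0] * LLM_DIM

query_feats = [[rng.gauss(0, 1) for _ in range(Q_DIM)] for _ in range(N_QUERIES)]
text_embeds = [[rng.gauss(0, 1) for _ in range(LLM_DIM)] for _ in range(N_TEXT)]

inputs = build_llm_inputs(query_feats, text_embeds, weight, bias)
print(len(inputs), len(inputs[0]))
```

Because the projected protein vectors have the same width as text embeddings, the LLM's attention layers can treat them like extra tokens, and gradients from the generation loss can flow back into the projector during end-to-end tuning.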

What it achieves (in plain terms)

On PFMBench (16 protein tasks across categories like Annotation, Localization, Solubility, Mutation, Interaction, Production), BioBridge performs close to specialized PLMs on key tasks. It’s particularly strong on:

- subcellular localization (including multi-class),
- enzyme classification (EC),
- binding/interaction-related tasks (e.g., BindingDB, metal ion binding).

At the same time, BioBridge retains solid general-language performance. On general benchmarks like MMLU and RACE, it stays relatively close to its base LLM (Qwen2.5-7B-Instruct), and dramatically outperforms earlier protein-QA hybrids that used smaller, weaker LLM backbones.

The ablation results (what actually matters)

Two “remove one component” tests reinforce the design:

- Remove biological continual pretraining → performance drops, even if protein embeddings are well-aligned.

  Translation: the LLM still needs internal biomedical knowledge to reason well.

- Remove the protein encoder + projector and feed raw sequences as text → performance collapses on protein tasks.

  Translation: protein understanding isn’t just “reading letters”; you need a real protein representation model and a proper bridge into the LLM.

Why this is interesting for real-world bio/biotech

BioBridge is pushing toward a more useful interface: one model that can predict, explain, and answer questions, rather than separate tools for each task. That matters for:

- faster protein annotation,
- early-stage target prioritization,
- better human-readable scientific explanations (useful for lab and clinical contexts),
- eventually, tighter loops in drug discovery and protein engineering workflows.

The big takeaway: BioBridge suggests you can get PLM-level protein performance and keep LLM-level reasoning—if you treat domain adaptation and modality alignment as first-class engineering problems, not afterthoughts.

source: https://arxiv.org/pdf/2602.17680